Problem Overview | Deriving Geolocation from an Image

Recently, we undertook a prototyping and feasibility effort here at Mosaic using similarity learning. The goal of the project was to develop a solution that can geolocate an image or video ideally to within tens of square meters, and to within roughly 100 square meters at most.

In other words, the task is to identify the GPS coordinates of an image taken anywhere in the world using only the pixel data, because the camera metadata is not available. The images in question would generally be found online, for example on social media.

The context would be something along the lines of security, peacekeeping, or emergency response. Organizations can generally do this manually, but the work is resource intensive and time consuming: a team of experts might need days or weeks to accomplish it.

Similarity Learning | Prototyping

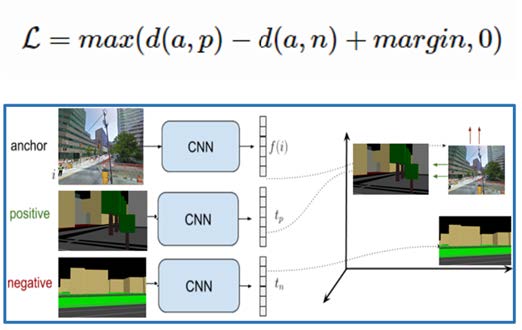

We decided to approach this problem as a similarity learning modeling effort. We used convolutional neural networks to train a model that takes an image or video as input and outputs a vector representation of the input, such that similar inputs will be close to each other in the vector space. The vector learning is driven by a triplet loss function.
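To make the triplet loss idea concrete, here is a minimal sketch in plain Python. It assumes Euclidean distance and a margin of 0.2; the prototype's actual distance metric, margin, and framework are not stated here, so these choices and the function names are illustrative assumptions only.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_loss(anchor, positive, negative, margin=0.2):
    """Hinge-style triplet loss for one (anchor, positive, negative) triplet.

    The loss is zero once the negative is farther from the anchor than the
    positive by at least `margin` (an assumed value, not taken from the project).
    """
    d_pos = euclidean(anchor, positive)
    d_neg = euclidean(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)


# Toy 2-D embeddings (hypothetical values, for illustration only).
well_separated = triplet_loss([0.0, 0.0], [0.0, 0.0], [3.0, 4.0])
badly_ordered = triplet_loss([0.0, 0.0], [3.0, 4.0], [0.0, 0.0])
```

In the first toy triplet the positive sits on the anchor and the negative is far away, so nothing is penalized. In the second the roles are reversed, so the loss is large and training would push the embeddings apart.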

The figure below should help describe the process conceptually. In a nutshell, we organize input examples (images and OSM-derived renderings) into classes, one per GPS location and heading, and construct triplets consisting of a similar pair (anchor-positive) and a dissimilar pair (anchor-negative).
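The triplet construction described above can be sketched roughly as follows. This is a simplified illustration that samples triplets at random; the file names, class labels, and the `build_triplets` helper are hypothetical, and the prototype's actual sampling strategy is not described here.

```python
import random
from collections import defaultdict


def build_triplets(examples, n_triplets, seed=0):
    """Sample (anchor, positive, negative) triplets from labeled examples.

    `examples` is a list of (item_id, class_label) pairs, where the class
    label stands for a GPS location plus heading.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for item, label in examples:
        by_class[label].append(item)

    # Only classes with two or more members can supply an anchor-positive pair.
    anchor_classes = [c for c, items in by_class.items() if len(items) >= 2]
    if not anchor_classes or len(by_class) < 2:
        raise ValueError("need a class with >= 2 items and at least 2 classes")

    labels = list(by_class)
    triplets = []
    for _ in range(n_triplets):
        pos_class = rng.choice(anchor_classes)
        neg_class = rng.choice([c for c in labels if c != pos_class])
        anchor, positive = rng.sample(by_class[pos_class], 2)
        negative = rng.choice(by_class[neg_class])
        triplets.append((anchor, positive, negative))
    return triplets


# Hypothetical examples: a photo and an OSM rendering share a class when they
# depict the same GPS location and heading.
examples = [
    ("photo_001.jpg", "loc_A/heading_090"),
    ("osm_render_001.png", "loc_A/heading_090"),
    ("photo_002.jpg", "loc_B/heading_180"),
    ("osm_render_002.png", "loc_B/heading_180"),
    ("photo_003.jpg", "loc_C/heading_270"),
]
triplets = build_triplets(examples, n_triplets=5)
```

A class with a single example, such as `loc_C/heading_270` here, can still serve as a negative even though it cannot provide an anchor-positive pair.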

No image dataset really covers the entire populated world, but structured, descriptive data (terrain, topography, etc.) does. We must therefore map the query image vector to the vector of the corresponding structured data that we do have access to. To do this, we render OpenStreetMap (OSM) data into an image. The figure directly below represents the general process: starting from sets of images labeled with a GPS location and heading, we pull the OSM data for each GPS location and render it with the same heading as the image.

We found the similarity learning approach to be highly accurate at finding similar images, and this sort of algorithm is well suited to image search. We are still tuning it for geolocation.

Some key benefits of similarity learning in general include:

- It can handle multi-modal input – e.g. you can train images and text into the same embedding space, or train images into an existing text embedding space (from a pre-trained model), as long as all input is labeled under the same schema.

- In a classification setting, it can handle virtually any number of classes, since it is not constrained by a Softmax activation.

- Once trained, you can easily add new classes without retraining the entire model, simply by passing in examples of the new class to obtain their embeddings, and then storing them for lookup and comparison at query time.
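The last point, adding classes without retraining, can be illustrated with a small lookup structure. This is a sketch under stated assumptions: the embeddings below are made-up 2-D vectors standing in for the output of a trained model, `EmbeddingIndex` is a hypothetical helper, and a brute-force scan is used where a production system would more likely use an approximate nearest-neighbor index.

```python
import math


class EmbeddingIndex:
    """Stores labeled embeddings and answers nearest-neighbor queries."""

    def __init__(self):
        self._entries = []  # list of (label, vector) pairs

    def add(self, label, vector):
        """Register an embedding for a class; no model retraining involved."""
        self._entries.append((label, list(vector)))

    def query(self, vector, k=1):
        """Return the labels of the k stored embeddings closest to `vector`."""
        ranked = sorted(self._entries, key=lambda e: math.dist(e[1], vector))
        return [label for label, _ in ranked[:k]]


index = EmbeddingIndex()
index.add("class_a", [0.0, 1.0])
index.add("class_b", [1.0, 0.0])
before = index.query([0.9, 0.1])   # closest stored class is class_b

# A new class appears later: embed its examples and store them.
index.add("class_c", [1.0, 1.0])
after = index.query([0.9, 0.9])    # now resolves to class_c
```

The model weights never change in this flow; only the stored set of reference embeddings grows.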

A Similar Geolocation Effort

There have been several attempts at similar image geolocation problems using machine learning in the last several years. Here is a recent approach:

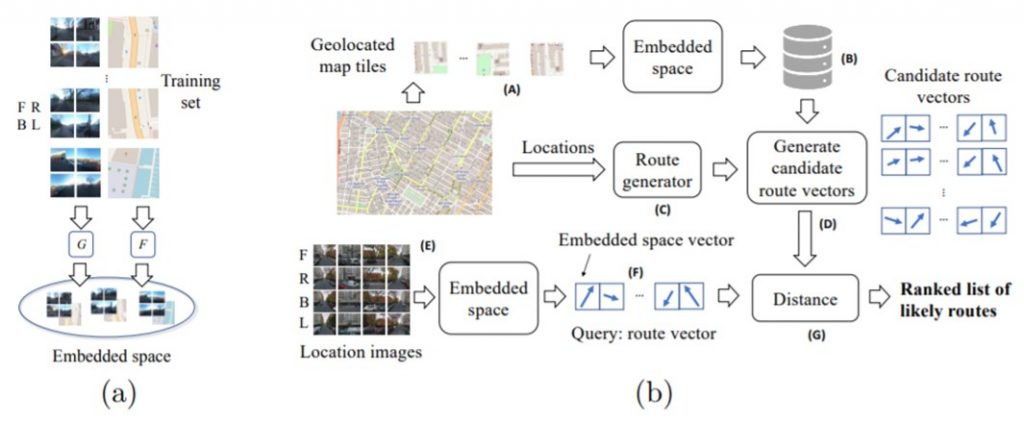

In July 2020, researchers in the UK approached geolocation in a similar way to the Mosaic prototype described above. Notably, they used the same data sources as we did to build their training set: Google Street View and renderings of OpenStreetMap.

Their constraints, however, were a bit more relaxed than ours. For each location they used 4 images, capturing each direction (roughly N, S, E, W), and mapped that “panorama” to an aerial OSM rendering centered on the GPS location. Since they were mapping a ground level image to an aerial image, they used a Siamese network approach to handle the different mediums, but still trained with a variant of triplet loss.

At query time, they required a sequence (or route) of evenly spaced panoramas up to 200 meters in length. Their Top-5 accuracy ranged from ~75% with a route length of 5 (50 m) to ~100% with a route length of 20 (200 m). Accuracy in this case refers to identifying a ~100 square meter area.
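For readers unfamiliar with the metric, Top-5 accuracy counts a query as correct when the true location appears anywhere among the five highest-ranked candidates. A minimal sketch of the general Top-k computation follows; the labels and rankings are toy values, not data from the study.

```python
def top_k_accuracy(ranked_predictions, true_labels, k=5):
    """Fraction of queries whose true label is among the top-k candidates.

    `ranked_predictions[i]` is the candidate list for query i, best first.
    """
    if len(ranked_predictions) != len(true_labels):
        raise ValueError("one ranked list is required per true label")
    if not true_labels:
        return 0.0
    hits = sum(
        1
        for ranked, truth in zip(ranked_predictions, true_labels)
        if truth in ranked[:k]
    )
    return hits / len(true_labels)


# Toy rankings for three queries (hypothetical location labels).
ranked = [["a", "b", "c"], ["b", "c", "a"], ["c", "a", "b"]]
truth = ["a", "c", "b"]

acc_at_1 = top_k_accuracy(ranked, truth, k=1)
acc_at_2 = top_k_accuracy(ranked, truth, k=2)
acc_at_3 = top_k_accuracy(ranked, truth, k=3)
```

Accuracy can only stay level or rise as k grows, which is why Top-5 figures are more forgiving than Top-1.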

Geolocation Use Cases

Geolocation use cases have come under fire recently due to their applications in targeted advertising, but the ability to accurately locate videos and images goes far beyond boosting sales growth. Separately, the ability to return a set of similar images can save users a lot of search time.

Aggregated location data can help city planners ease traffic problems, health officials identify disease patterns, and government agencies monitor pollution levels.

Commercial uses of this information could include inventory control and fleet management, location planning, and geofencing. An aviation operator could map out the real-time locations of its assets and match them with weather information to improve routing decisions. The same operator could geofence its assets to alert decision makers when planes travel in and out of certain geographical zones.

Artificial intelligence enables people to accurately determine their own location, and that of items of interest, in milliseconds. Mosaic is primed to partner with any company to start taking advantage of this technology.