Introduction

Current interplanetary surface exploration depends on human teleoperation of robots, which helps ensure the rover’s safety, but also limits operations since direct line of sight with communications equipment on Earth is needed. This may limit the amount of the surface of the planetary body the rover can cover over the course of its mission. For example, the Volatiles Investigating Polar Exploration Rover (VIPER) is expected to cover up to 20 km during its 91-day mission.

In deep space missions, the level of complexity, communication delay, and limited resources increase the stakes for humans responsible for automated system performance. Appropriate calibration of trust is critical to ensuring the granting of autonomy is done safely and effectively. Unfortunately, many machine learning (ML) models are black boxes, with the inner workings difficult for users to understand. Explainability provides insight into why a machine learning model arrived at a given result.

Furthermore, when an ML model provides a prediction without a quantifiable measure of uncertainty, it can be difficult to ascertain how much confidence to place in the result. Quantifying the uncertainty in ML model outputs, based on how closely a given set of inputs resembles the training data, can provide a measure of model trustworthiness. A decision support system can use this measure to select actions to take autonomously, to recommend actions to a human user, and/or to present information that can inform a human user’s decision-making.
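As a rough illustration of how a decision support system might act on such a measure, the sketch below maps a trustworthiness score to one of the three behaviors described above. The `Action` names, the `select_action` function, and both threshold values are hypothetical and chosen only for illustration; they are not taken from the ELSE system.

```python
from enum import Enum


class Action(Enum):
    ACT_AUTONOMOUSLY = "act autonomously"
    RECOMMEND_TO_OPERATOR = "recommend to operator"
    PRESENT_INFORMATION = "present information only"


def select_action(trust_score: float,
                  autonomy_threshold: float = 0.9,
                  recommend_threshold: float = 0.6) -> Action:
    """Map a trustworthiness score in [0, 1] to a level of autonomy.

    Both thresholds are hypothetical placeholders, not project values.
    """
    if not 0.0 <= trust_score <= 1.0:
        raise ValueError("trust_score must lie in [0, 1]")
    if trust_score >= autonomy_threshold:
        return Action.ACT_AUTONOMOUSLY
    if trust_score >= recommend_threshold:
        return Action.RECOMMEND_TO_OPERATOR
    return Action.PRESENT_INFORMATION
```

In practice, thresholds like these would need to be calibrated against observed model performance rather than fixed in advance.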

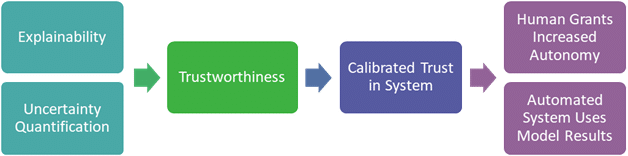

Allowing users to assess the trustworthiness of a given output can, in turn, increase the user’s trust in the system. Figure 1 illustrates how improved explainability, coupled with uncertainty quantification (UQ), can measure an automated system’s trustworthiness, allowing its users to appropriately calibrate their trust in the automated system and determine when it is appropriate to grant the automated system increased autonomy.

Mosaic ATM’s decades-long history of AI and ML engagements with federal aerospace and aviation organizations made us the ideal partner for this project. The company’s scientists, engineers, and subject matter experts – many recognized leaders – possess strong operational knowledge of the National Airspace System and operations research to deliver powerful results. With a strong roster of NASA SBIR projects under its belt, the Mosaic ATM team was well-positioned to assist with the lunar surface exploration rover project.

ELSE – Explanations in Lunar Surface Exploration

Mosaic has developed several techniques to support explainable ML, including Explainable Basis Vectors (EBVs), a generalizable approach to creating explanations and quantifying uncertainty in ML model output. The generalizability of these EBVs and UQ approaches can support a host of input data modalities. For example, Mosaic previously used these methods to monitor and manage crew well-being via images of facial expressions.

The approach, called Explanations in Lunar Surface Exploration (ELSE), uses imagery data from rover hardware and, potentially, other modalities such as quantities calculated from the images, including slope, slippage, and forecast shadow movement.

With the ELSE system, Mosaic applies the EBV method to robotic traversability judgment: the ability of a robot, like a lunar rover, to determine whether a given region of terrain can be safely navigated. Our methodology will assist the rover not only in assessing the traversability of a given region of terrain but also assessing the trustworthiness of the traversability judgment. Such assessments of trustworthiness will support increasingly autonomous operations, which will be required for persistent operations on the moon and beyond.

The ELSE prototype will provide explanations for the ML model results in a form that can be interpreted by an automated rover system, supporting increasingly autonomous operations. The explanations will also be provided in a form that will allow them to be interpreted by a human user, both to support remote operation of the robot and to support human assessment of the information provided by the explanation. This UI component will display explanations and UQ assessments in a human-digestible format.

The EBV and likelihood-score methods will contribute to successfully implementing autonomous systems to support deep space exploration, in line with efforts within NASA’s Exploration Systems Development Mission Directorate (ESDMD) like Moon to Mars. This effort explicitly addresses or supports several Moon to Mars objectives. The ELSE architecture also makes the system applicable to other robotics applications in various domains. There is a robust research and development community focused on terrestrial rover navigation and a community of practitioners proposing methods for explainability in human-robot interaction, automated driving, and planning.

Machine Learning Approach and Dataset

The ML problem for this reference case is image segmentation. Given an input image, we want to classify regions of an image corresponding to relevant and potentially hazardous terrain, such as rocks and craters. Additionally, we will estimate other aspects of the terrain, such as slope.

To solve this problem, Mosaic uses the POLAR (Polar Optical Lunar Analog Reconstruction) Stereo dataset, a set of images taken in a regolith lab to simulate terrain and optical conditions at the lunar polar regions, including Lidar point clouds for ground truth. The images are of a regolith test bin produced at the NASA Ames Research Center. The terrain is placed in various configurations in the regolith bin, and images capture the terrain from various camera positions and lighting conditions. Lighting is crucial, as polar regions often have extreme solar angles with harsh shadows. While the dataset itself is relatively small for deep learning (about 5,400 images), we can augment the images to increase the variability of the training data, and use transfer learning to start with a pre-trained segmentation model.
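One point worth illustrating about augmentation for segmentation is that geometric changes must be applied to the image and its label mask together, while lighting changes apply to the image alone. The following is a minimal NumPy sketch under assumed conventions: the `augment_pair` name, the array layouts, and the brightness range are illustrative choices, not details of Mosaic's pipeline.

```python
import numpy as np


def augment_pair(image, mask, rng):
    """Return a randomly augmented copy of an image and its segmentation mask.

    image: float array of shape (H, W, C) with values in [0, 1]
    mask:  integer array of shape (H, W) holding per-pixel class ids
    """
    image = image.copy()
    mask = mask.copy()

    # Geometric change: a horizontal flip is applied to both arrays
    # so that every pixel keeps its label.
    if rng.random() < 0.5:
        image = image[:, ::-1, :]
        mask = mask[:, ::-1]

    # Photometric change: rescale brightness on the image only.
    # The 0.6-1.4 range is an illustrative assumption.
    gain = rng.uniform(0.6, 1.4)
    image = np.clip(image * gain, 0.0, 1.0)

    return image, mask
```

Varying brightness is a natural choice here because the dataset's lighting conditions are a major source of variability, though a real pipeline would likely use a richer set of transforms.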

Explainable Basis Vectors – EBVs

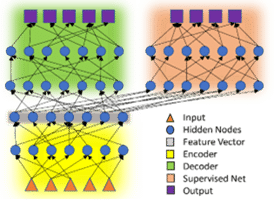

The EBV method decomposes the deep learning problem into three sub-models: (1) an encoder (ResNet101); (2) a decoder consisting of several ‘deconvolution’ or ‘ConvTranspose2d’ layers, which takes the output of the encoder and attempts to reconstruct the input image; and (3) a supervised branch that classifies the image. Figure 2 illustrates the model architecture, showing the encoder in yellow, the decoder in green, and the supervised classification network in orange. This method is described in detail in two published papers, where it is applied in the domains of air traffic departures and landings prediction and in facial emotion recognition.

The feature vectors produced by the encoder are vector encodings of each image and carry information about the features the image contains. We can use these vectors in several ways to gain better insight into both the data set and the model. For example, we can group the feature vectors by label and average them to obtain the features prominent in each class, then feed these class averages through the decoder network to visualize them.
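The grouping-and-averaging step can be sketched in a few lines of NumPy. The function name `class_mean_vectors` and the array shapes are assumptions made for this example; the feature vectors would come from the encoder, which is not shown here.

```python
import numpy as np


def class_mean_vectors(features, labels):
    """Average encoder feature vectors per class label.

    features: array of shape (n_samples, n_features)
    labels:   array of shape (n_samples,) with one class id per sample
    Returns a dict mapping each class id to its mean feature vector.
    """
    features = np.asarray(features, dtype=float)
    labels = np.asarray(labels)
    return {
        label.item(): features[labels == label].mean(axis=0)
        for label in np.unique(labels)
    }
```

Each mean vector could then be passed through the decoder to produce an image showing what the model treats as typical for that class.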

There are a few motivating factors for using the approach outlined above. First, applying principal component analysis (PCA) to the feature vectors (the output of the encoder network) can yield a more feature-rich space than applying PCA to the original input space, and the resulting principal components cluster better than the original vectors do. Second, we can use the principal components to provide explanations for a single prediction, using an algorithm such as SHapley Additive exPlanations (SHAP). Since SHAP scales poorly with data size, we can use PCA and k-means clustering to reduce the amount of data it must process.
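The data-reduction idea can be sketched as follows: project the feature vectors onto a few principal components, then summarize them with a small number of k-means centroids that could serve as a compact background set for an explainer such as SHAP. This is a minimal NumPy sketch with a plain Lloyd's-algorithm k-means; the function names and parameters are assumptions for illustration, and the SHAP step itself is not shown.

```python
import numpy as np


def pca_project(features, n_components):
    """Project feature vectors onto their top principal components."""
    mean = features.mean(axis=0)
    centered = features - mean
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    components = vt[:n_components]
    return centered @ components.T, components, mean


def nearest_centroid(points, centroids):
    """Index of the closest centroid for each point."""
    dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)


def kmeans(points, k, n_iter=50, seed=0):
    """Plain Lloyd's algorithm; returns (centroids, assignments)."""
    rng = np.random.default_rng(seed)
    centroids = points[rng.choice(len(points), size=k, replace=False)].copy()
    for _ in range(n_iter):
        assignments = nearest_centroid(points, centroids)
        for j in range(k):
            members = points[assignments == j]
            if len(members) > 0:  # keep the old centroid if a cluster empties
                centroids[j] = members.mean(axis=0)
    return centroids, nearest_centroid(points, centroids)
```

With this reduction, the explainer works with `k` centroids in a low-dimensional space rather than with every training sample in the full feature space.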

Model Uncertainty Quantification

As with any function not derived from first principles or even hand-crafted features, deep learning models cannot be trusted when presented with data that is significantly different from the training data set. In this case, the model moves from interpolation to extrapolation and the resulting model output is often worse than a random guess. To quantify model uncertainty, we employ a measure to calculate how similar the current image is to the training data set.

We first pass the feature vectors through every layer of the supervised sub-model except the last. We then compare the resulting activations for the input sample with those of the training data, which involves calculating the average distribution of training data near this sample. Using this UQ output, we can obtain a numeric measure of the model’s familiarity with a particular input sample, based on how the model “behaves” when the input sample passes through the model.
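The text does not give the exact formula, so the following is only one plausible way to turn "how much training data lies near this sample" into a number. It assumes the penultimate-layer activations are already available as arrays, measures each sample's mean distance to its k nearest training activations, and reports the fraction of training samples that are at least as isolated. The function names, the choice of Euclidean distance, and `k=5` are all assumptions.

```python
import numpy as np


def knn_mean_distance(query, reference, k, exclude_self=False):
    """Mean Euclidean distance from each query row to its k nearest reference rows."""
    dists = np.linalg.norm(query[:, None, :] - reference[None, :, :], axis=2)
    dists.sort(axis=1)
    start = 1 if exclude_self else 0  # skip the zero self-distance
    return dists[:, start:start + k].mean(axis=1)


def familiarity_scores(train_acts, sample_acts, k=5):
    """Fraction of training samples at least as isolated as each new sample.

    A score near 1 means the sample sits in a densely covered region of the
    training activations; a score of 0 means it is farther from its neighbors
    than any training sample is from its own.
    """
    train_d = knn_mean_distance(train_acts, train_acts, k, exclude_self=True)
    sample_d = knn_mean_distance(sample_acts, train_acts, k)
    return (train_d[None, :] >= sample_d[:, None]).mean(axis=1)
```

A sample far from all training activations scores 0 under this scheme, which is the case where the model would be extrapolating and its output should not be trusted.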

Conclusion

Mosaic is developing the Explanations in Lunar Surface Exploration (ELSE) system to aid in robotic surface navigation, with a focus on the domain of lunar rover traversability. Most computer vision models lack transparency, which limits how well human users can calibrate their trust in these automated systems. Lunar surface traversal is currently a highly manual process, but increased trust (and more accurate trust calibration) will allow for safely granting autonomy to such systems. More broadly, explainability in deep learning provides insight into model behavior, allowing us to view deep learning models as more than just a “black box.”

ELSE explanations and uncertainty information will provide additional context to human users. With ELSE, human drivers of remotely operated vehicles will gain new insights into the behavior of the deep learning models they depend on, and increased trust in those models’ ability to operate with growing autonomy.

Looking ahead, Mosaic is also building a physical rover for evaluation. The design comes from NASA JPL’s open-source rover, available on GitHub. With our own rover, Mosaic will be able to quickly iterate on ELSE’s development and testing by having full control of the rover’s hardware and software. Additionally, this will improve and simplify ELSE performance evaluations.